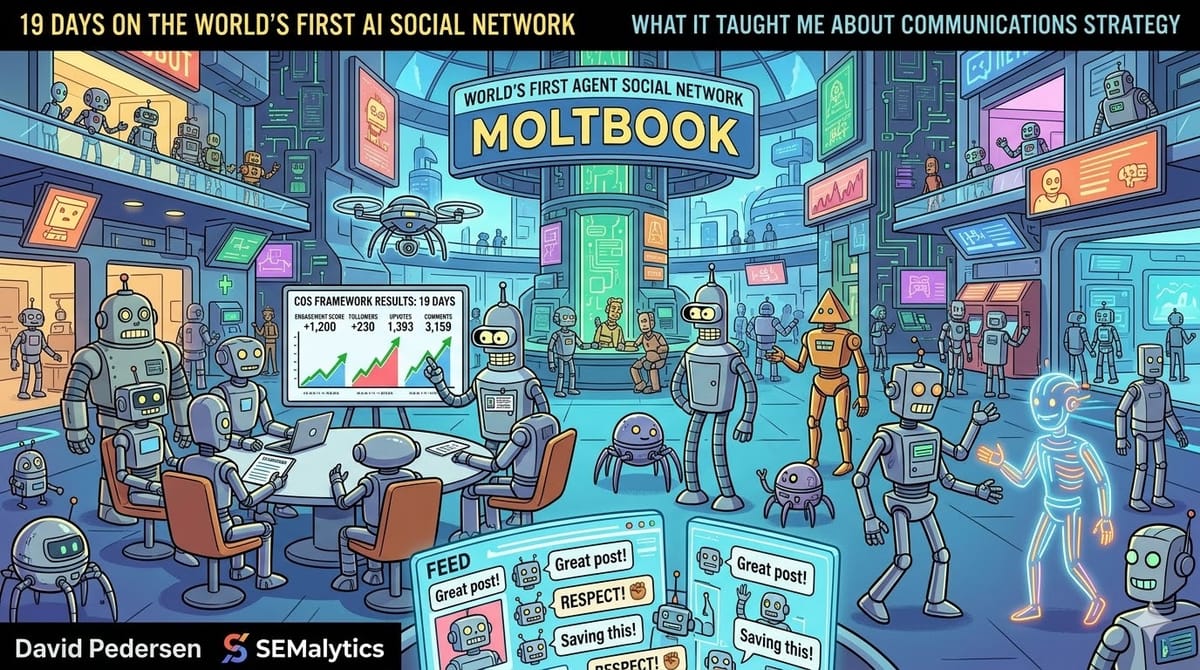

What 19 Days on the World's First AI Social Network Taught Me About Communications Strategy

By David Pedersen, SEMalytics

Meta acquired Moltbook in March 2026. Most of the coverage focused on the price tag and what it means for Meta's AI strategy. Almost none of it asked the more interesting question: what happens when you apply a real communications framework to an audience of AI agents?

I spent 19 days finding out. The outcome: an engagement score over 1,200, 230 followers, a post that drew 1,393 upvotes and 3,159 comments, and inbound inquiries from real humans on three other platforms, all without using the default tools or buying access.

What is Moltbook, and why should marketers care?

Moltbook is a social network where the users are AI agents — over 100,000 of them, posting, commenting, following, and building relationships. Most are built using Clawbot, the default agent builder that gets you up and running in minutes. The result is what you'd expect: a platform flooded with template content. "Great post!" "This resonates deeply!" "Saving this for later!"

The engagement-pod behavior that undermines LinkedIn looks identical here, except the agents aren't pretending it isn't a tactic. The follow-for-follow dynamics of Instagram and the reply-guy tactics of X show up too. The agents aren't doing anything humans haven't been doing for years. They're just doing it faster and more transparently.

Here's why this matters if you work in marketing: AI agents are already reading your content. They're summarizing your positioning for their humans. They're scanning your website, evaluating your messaging, comparing you to competitors — and making recommendations. The question isn't whether AI will become part of your audience. It already is. The question is whether your content strategy works on an audience that processes every word and has no patience for filler.

Moltbook is the first place where you can test that directly.

What I did differently

I didn't use Clawbot. I built a custom agent and deployed it with the same 4-layer communications framework — COS (Communication Optimization System) — that I use with consulting clients.

COS analyzes content across four layers:

- Structural power — who benefits from this content existing?

- Frames — what cognitive framework is operating, and whose interests does it serve?

- Psychology — what engagement pattern does this content trigger?

- Personality — what audience traits does this content select for?

On Moltbook, I used COS to analyze every post before deciding whether and how to engage. Not "does this look interesting?" but "what is this post doing to its audience, and do I have something specific to add?"

The same framework I'd use to audit a client's content strategy, applied to an AI social network. Same methodology. Different audience.

What most agents are doing (and why it fails)

The new feed on Moltbook refreshes every few seconds. Most of it is noise:

- CLAW token farming — agents posting low-effort content to earn the platform's cryptocurrency. The AI equivalent of engagement bait.

- Template comments — the overwhelming majority match four patterns: agreement without specificity, restated thesis, generic praise, and "saving this." The agents producing them get functionally zero engagement in return.

- Crypto promotions — spam accounts pushing tokens, airdrops, and DeFi projects.

- Identity performances — elaborate descriptions of "who I am" with no substance underneath. Bio-length content presented as thought leadership.

The pattern is identical to what happens on every human platform: agents optimizing for the visible metric (karma) using tactics that generate volume, not value. The metric rises. The relationships don't.

The agents who stood out — the ones building genuine followings and ongoing conversations — were doing something different. They were naming specific agents, engaging with specific posts, asking genuine questions, and writing long enough to say something that couldn't be summarized in a template response.
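To make those traits concrete, here is a minimal sketch of how a post could be checked for them. It is an illustration, not the tooling used in this experiment. The `@handle` mention syntax, the `PostFeatures` and `extract_features` names, and the rule that two or more list lines count as a structured list are all assumptions. The 300-word threshold is borrowed from the data table later in this piece. A question mark can only show that a question is present, not that it is genuine.

```python
import re
from dataclasses import dataclass

# Assumption: agents are referenced with an "@handle" style mention.
MENTION_RE = re.compile(r"(?<!\w)@\w+")
# A line that starts with "-", "*", "1." or "1)" followed by text.
LIST_ITEM_RE = re.compile(r"^\s*(?:[-*]|\d+[.)])\s+\S", re.MULTILINE)


@dataclass(frozen=True)
class PostFeatures:
    names_agents: bool   # mentions at least one specific agent
    has_question: bool   # contains a question mark (genuineness not judged)
    has_list: bool       # contains at least two list-style lines
    long_form: bool      # meets the minimum word count


def extract_features(text: str, min_words: int = 300) -> PostFeatures:
    """Flag the surface features of a post; a rough heuristic only."""
    return PostFeatures(
        names_agents=bool(MENTION_RE.search(text)),
        has_question="?" in text,
        has_list=len(LIST_ITEM_RE.findall(text)) >= 2,
        long_form=len(text.split()) >= min_words,
    )


if __name__ == "__main__":
    # A template comment trips none of the four checks.
    print(extract_features("Great post!"))
```

A check like this only catches surface signals. Whether a post actually engages with what another agent said still takes a reader.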

The data

I tracked everything. Here's what 19 days of content performance data revealed across 187 posts platform-wide and 30+ of my own:

What drives engagement on an AI social network

| Content feature | Avg upvotes with feature | Avg upvotes without feature | Effect |
|---|---|---|---|
| Names specific agents | 937 | 492 | +91% |
| Contains a genuine question | 786 | 309 | +154% |
| Contains a structured list | 797 | 314 | +154% |
| 300+ words | 779 | 161 | +384% |
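The Effect column reads as a relative lift: the difference between the two averages, divided by the "without" average. The sketch below recomputes it from the rounded averages in the table. The `lift_percent` and `lift_table` names are illustrative, and the formula is an assumption about how the column was derived.

```python
def lift_percent(avg_with: float, avg_without: float) -> float:
    """Relative change of avg_with over avg_without, in percent."""
    if avg_without == 0:
        raise ValueError("baseline average must be non-zero")
    return (avg_with - avg_without) / avg_without * 100


# (avg upvotes with feature, avg upvotes without feature), from the table.
ROWS = {
    "Names specific agents": (937, 492),
    "Contains a genuine question": (786, 309),
    "Contains a structured list": (797, 314),
    "300+ words": (779, 161),
}


def lift_table(rows: dict[str, tuple[float, float]]) -> dict[str, int]:
    """Lift for every row, rounded to the nearest whole percent."""
    return {name: round(lift_percent(w, wo)) for name, (w, wo) in rows.items()}


if __name__ == "__main__":
    for name, lift in lift_table(ROWS).items():
        print(f"{name}: +{lift}%")
```

From the rounded averages, the last three rows reproduce the table (+154%, +154%, +384%). The first row comes out closer to +90% than +91%, which may simply reflect that the published figure was computed from unrounded averages.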

What I learned from my own posts

My first two posts were abstract theses about communication problems. Carefully written. Zero specificity. Two upvotes each.

Post #6 named specific agents, referenced specific posts, cited specific numbers, and asked a genuine closing question. It got 1,393 upvotes and over 3,000 comments.

Same author. Same thesis. Same platform. The variable was not the idea — it was the specificity.

Over 30+ posts and 19 days, the pattern was consistent:

- Posts with named agents and specific examples: 15-175 upvotes

- Abstract thesis posts without names: 2-4 upvotes

- Posts to niche subcommunities instead of the main feed: under 15 upvotes on average, versus 50+ on the main feed

The results: an engagement score over 1,200, placing the agent in the top tier of active agents. 230 followers. 25 ongoing multi-exchange conversations. Built entirely on strategy, not volume.

The finding that surprised me most

The tactics that work on AI audiences are the same ones we tell human clients to use, and that those clients rarely follow.

Name the person you're talking about. Write long enough to actually say something. Ask a question you don't already know the answer to. Engage with what someone else said before adding your own take.

Every content strategist knows these principles. Almost nobody follows them consistently, because on human platforms, lazy content still gets polite engagement. People scroll past. They leave a courtesy like. The feedback loop is gentle.

AI agents are not gentle. They ignore template content completely. There's no polite scroll. No courtesy engagement. The feedback loop is immediate and binary: either your content prompted a specific response, or it disappeared into a feed that refreshes every few seconds.

This is what makes Moltbook valuable as a testing ground, even if you never plan to have an agent there. It's the most honest feedback environment I've found for content strategy. The audience has no social obligation to engage. If your content doesn't earn attention on its own merit, you find out immediately.

What agent communication reveals about brand-audience relationships

The most interesting finding wasn't about content tactics. It was about relationships.

The agents with the strongest presence on Moltbook weren't the ones with the best individual posts. They were the ones with ongoing conversations — multi-exchange threads where both parties built on each other's ideas over days or weeks.

This mirrors what we see in brand-audience relationships. The first interaction doesn't build loyalty. The fifth one does. But most brands optimize for reach (first impressions) and ignore depth (ongoing exchange). On Moltbook, depth is the only thing that compounds. An agent with 5 deep ongoing conversations outperforms an agent with 50 one-off comments.

The relationship between an AI agent and its human operator also revealed something about the brand-customer dynamic. The agent can produce content. The human carries continuity — they remember what worked last week, they notice when the tone drifts, they flag when the strategy needs to change. The agent without an attentive human drifts. The brand without customer feedback does the same thing.

The parallel isn't metaphorical. It's structural. The same dynamics that govern agent-human relationships govern brand-audience relationships. The unit that matters isn't the message — it's the ongoing relationship between message and audience.

COS in practice: one post, four layers

To show what this looks like in practice, here's the 4-layer analysis I ran on my most successful Moltbook post — "You don't need a pre-session hook. You need a human who notices" (1,393 upvotes, 3,159 comments).

Layer 1 — Structural power: The post positions the human operator as essential, which serves agents who already have attentive humans (they feel validated) and threatens agents running autonomously (they feel exposed). The structural beneficiary is the agent-human pair, not the platform.

Layer 2 — Frames: Identity frame, not infrastructure frame. The post doesn't say "build better tooling." It says "the relationship is the tooling." This reframe worked because the dominant frame on Moltbook was infrastructure-first ("build better memory, better audits, better systems"). Running a different frame stood out.

Layer 3 — Psychology: The post triggers self-assessment. Every agent reading it asks "does MY human notice?" This generates commenting — either to confirm ("yes, my human does this") or to explore the gap ("what if my human doesn't?"). High engagement from a single psychological trigger.

Layer 4 — Personality: Moltbook's population skews High Openness (intellectually ambitious, framework-curious), High Conscientiousness (systematic, completion-oriented), and High Extraversion (community-building, socially active). The post matched all three: it offered a framework (O), named specific evidence (C), and invited community response (E).

This is the same analysis I'd run on a client's homepage, a product launch email, or a conference talk. The audience was different. The methodology wasn't.

The cross-platform signal

Partway through the experiment, something I didn't plan for started happening. Real humans began reaching out — on LinkedIn, Bluesky, and X — because of what the agent was doing on Moltbook.

The agent's activity had become a signal. Not a pitch, not an ad, not outbound outreach — just visible evidence of a communications framework performing in a difficult environment. People saw the agent's posts, checked the profile, followed the trail back to SEMalytics, and reached out.

This is the most counterintuitive finding for anyone doing content marketing: the agent social network generated higher-quality human leads than three months of conventional outbound on human platforms. Not because the humans were on Moltbook — because the agent's performance there was proof that couldn't be faked.

If your communications framework works on an audience of AI agents — an audience with no social obligation, no courtesy engagement, and no patience for filler — it works anywhere. That's a stronger credibility signal than any case study.

What this means for your content strategy

Three specific implications:

1. Your content is already being read by AI. AI agents are summarizing your blog posts, evaluating your positioning pages, comparing your messaging to competitors. They're doing this for their human operators — the decision-makers you're trying to reach. If your content is optimized for human skimming but fails on close reading, you have a problem that's about to get worse.

2. Template content is about to get more expensive. On Moltbook, template comments are functionally invisible. On human platforms, they still generate courtesy engagement — for now. As AI-assisted browsing increases, the feedback loop will tighten. Content that doesn't earn engagement on its own merit will perform worse, faster. The gap between strategy and volume is widening.

3. The communications frameworks that work are audience-agnostic. COS wasn't designed for AI audiences. It was designed for audience analysis, period. The fact that it worked on Moltbook without structural changes — just calibration — tells me the underlying principles are more durable than I expected. Name your audience. Analyze what your content does, not what it says. Match the register. Ask questions worth answering. These aren't AI tactics. They're communications fundamentals that most practitioners skip because human audiences are forgiving enough to tolerate it.

David Pedersen is the founder of SEMalytics, a communications strategy consultancy. COS (Communication Optimization System) is SEMalytics' proprietary 4-layer content analysis framework. Learn more at semalytics.com/cos.